Resources

Tools, tutorials and learning resources

Grok 4.3 Lands: How xAI's New Flagship Stacks Up Against Three Chinese Open-Source Models

xAI's Grok 4.3 (~0.5T params, $1.25/$2.50 per 1M tokens) goes head-to-head with DeepSeek V4 Flash, MiniMax M2.7, and MiMo 2.5. Benchmarks, pricing, and where each model actually wins.

Warp Goes Open-Source: The Day Code Stopped Being a Moat

Warp open-sources its terminal with OpenAI sponsorship. AI agents erode code as a competitive barrier — the new moat is community velocity.

Xiaomi MiMo V2.5 Pro: When a Model Learns to Work for 11 Hours Straight

MiMo V2.5 Pro built an 8,192-line video editor autonomously over 11.5 hours. The real differentiator isn't benchmark scores — it's the discipline to sustain structured work across a thousand-plus tool calls without human intervention.

DeepSeek V4 Ships Day-One Open Source on Huawei Chips: The Global AI Stack Split Begins

DeepSeek V4 launched day-one open source under the MIT license, running on Huawei Ascend chips at a fraction of GPT-5.5's cost. The numbers behind a potential AI stack split.

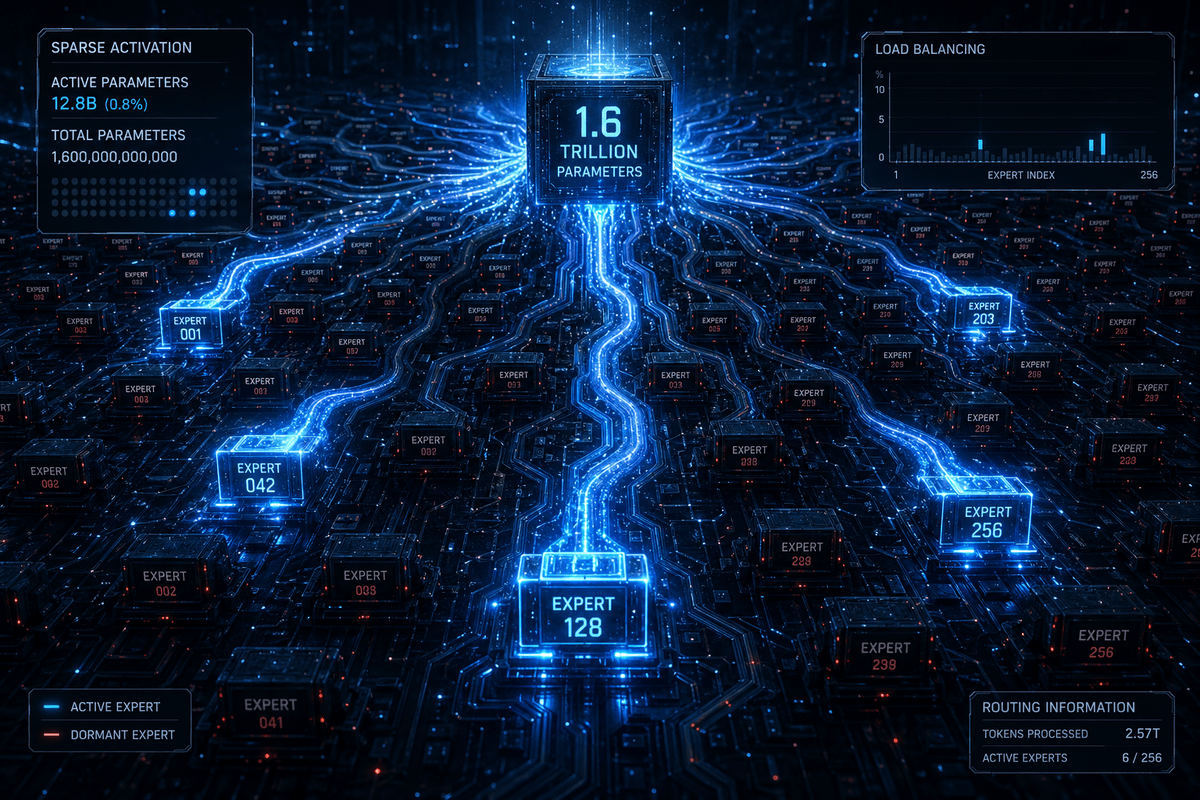

DeepSeek V4: What It Is, What It Isn't, and Why It Keeps Western AI Labs Up at Night

DeepSeek V4 Pro redefines the open-source AI ceiling with 1.6T parameters (49B active), a 1M-token context, and MIT licensing.

LightRAG: The Graph-Based RAG Framework That Outperforms LangChain and GraphRAG

LightRAG, a graph-based RAG framework from HKU published at EMNLP 2025, has drawn 34K+ GitHub stars and outperforms NaiveRAG, HyDE, and GraphRAG across multiple domains.

GPT-5.5 Arrives: OpenAI's New Agentic Model Hits 82.7% on Terminal-Bench 2.0

OpenAI releases GPT-5.5 with 82.7% on Terminal-Bench 2.0 and 93.6% on GPQA Diamond, setting new marks in agentic coding and scientific reasoning.

OpenAI Releases Privacy Filter: A 1.5B Open-Weight Model for PII Detection

OpenAI's new 1.5B-parameter model (50M active parameters) detects 8 categories of PII with 96% F1 and is available now as an open-weight download.