On April 24, 2026, DeepSeek released the V4 series. This time, it didn’t just bring more parameters—it brought an architectural package that forces Western AI labs to rethink their pricing strategies.

What DeepSeek V4 Is

1. Two Models, Two Missions

The V4 series ships in two variants:

- V4-Pro: 1.6 trillion total parameters, 49 billion activated per forward pass, flagship reasoning

- V4-Flash: 284 billion total parameters, 13 billion activated per forward pass, cost-optimized

Both support a 1 million token context window with up to 384K output tokens, released under the MIT license with weights on HuggingFace.

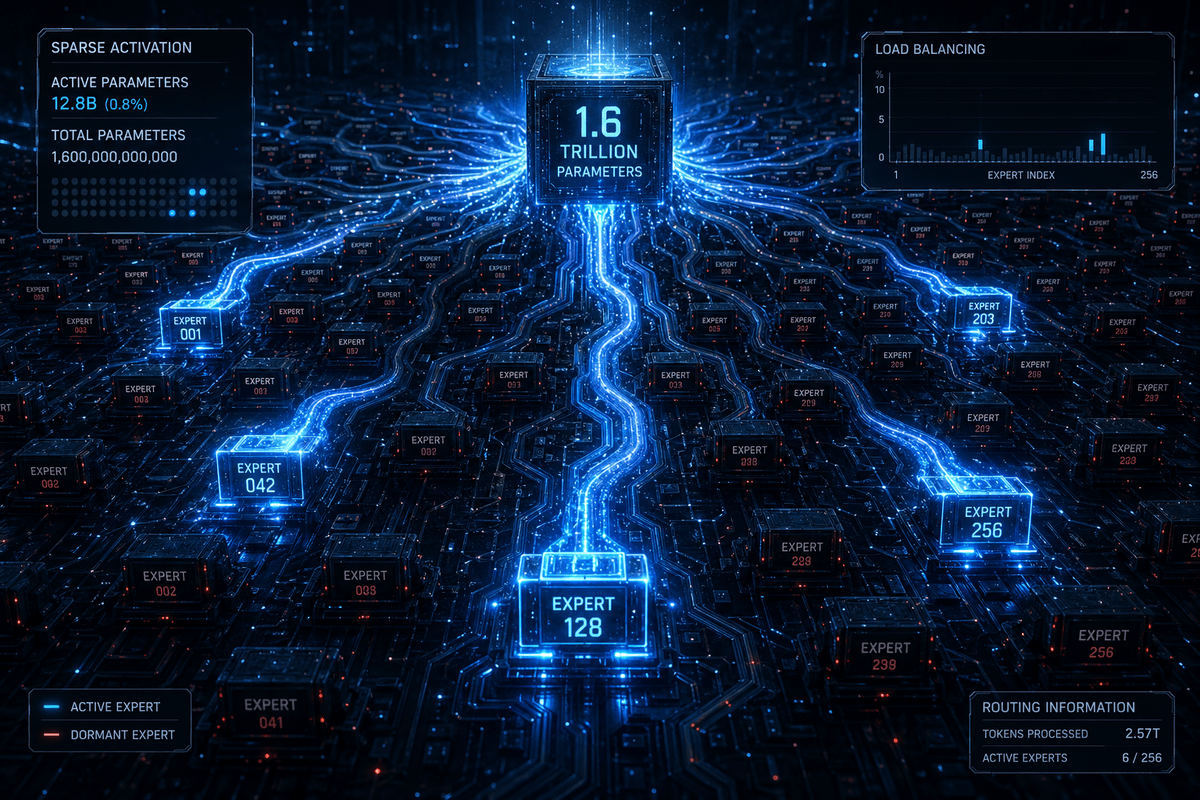

2. MoE Is Engineering, Not Marketing

DeepSeek V4 uses a Mixture-of-Experts (MoE) architecture. In plain terms: the model has 1.6 trillion parameters, but each token only activates about 3% (49B) of expert subnetworks. It’s like a company with 1,000 specialists where each project only pulls in the 30 most relevant people.

The direct consequence: inference costs match a 49B dense model, but capabilities approach a 1.6T dense model. API pricing sits around .10-0.30 per million tokens—roughly 1/50th of GPT-4.

3. Hybrid Attention: Engineering 1M Context

The million-token context isn’t a marketing number. DeepSeek introduced a dual-layer attention system:

- CSA (Compressed Sparse Attention): Compresses every m tokens’ KV cache into a single entry; query tokens attend only to top-k compressed entries. This delivers precise local context retrieval.

- HCA (Heavily Compressed Attention): Applies aggressive 128x compression, then runs dense attention over the compressed representation. This provides cheap global context awareness.

CSA and HCA alternate throughout the network—the model switches between focused retrieval and wide-angle perception. At 1M tokens, V4-Pro requires only 27% of V3.2’s FLOPs and 10% of its KV cache.

4. Dual-Mode Reasoning: Think / Non-Think

V4 offers two modes at the API level:

- Think mode: Multi-step reasoning, 8-15 second deliberation, suited for complex analysis and agentic workflows

- Non-Think mode: Sub-2-second response, ideal for content generation, summarization, and data extraction

This is more controllable than previous “Chain of Thought” prompting—developers explicitly choose reasoning depth per scenario.

What DeepSeek V4 Is Not

1. Not Another “GPT Killer”

Don’t reduce this to “Chinese model beats American model.” DeepSeek’s competitive edge isn’t a few benchmark points over GPT-5—it’s delivering equivalent capability at one-tenth the cost. This is a business model difference, not a pure technology race.

2. Not a Simple Dense Model Scale-Up

1.6 trillion parameters sounds scary, but this isn’t “brute force wins.” Deploying it as a 1.6T dense model would bankrupt you. V4’s value lies in proving that sparse activation + efficient routing can compress a trillion-parameter model’s inference cost into an acceptable range without sacrificing capability.

3. Not the End of RAG

A million-token context can swallow an entire codebase or long document in one pass, but that doesn’t mean RAG disappears. CSA/HCA is itself “retrieval-augmented attention”—it internally routes which tokens to focus on. RAG moved from external retrieval to internal routing. The form changed; the problem didn’t.

4. Not a Government Subsidy Product

V4 runs on Huawei Ascend chips and trains at a fraction of Western counterparts’ cost. This isn’t subsidies—it’s MoE architecture + FP8 training + algorithm-hardware co-design genuinely reducing compute requirements. DeepSeek-V3’s full training used only 2.788M H800 GPU hours with zero irrecoverable loss spikes or rollbacks.

What We Can Learn from DeepSeek V4

1. Architecture Innovation Beats Compute Brute Force

While OpenAI and Google buy more GPUs, DeepSeek did three things:

- Optimized attention: Made long context no longer a compute black hole

- Improved optimizers: Switched from AdamW to Muon for faster convergence and trillion-parameter training stability

- Designed routing: Taught the model which experts handle which tokens

None of these require more GPUs. They require deeper systems thinking.

2. Open Source Is Strategy, Not Charity

MIT license + HuggingFace weights + rock-bottom API pricing. This combo isn’t “doing good”—it’s rapidly capturing developer mindshare. When V4 becomes the default choice, toolchains, cloud services, and enterprise customizations form an ecosystem around it. Open source here is customer acquisition, not the endgame.

3. The “Good Enough” Inference Philosophy

V4-Flash activates only 13B parameters but handles most production scenarios adequately. This reveals an underappreciated trend: inference cost is replacing model capability as the biggest bottleneck for AI productization. When V4-Flash completes 80% of tasks at 1/50th of GPT-4’s price, the remaining 20% capability premium becomes a business question, not a technical one.

4. Attention Mechanisms Can Still Evolve

The CSA + HCA hybrid design shows that Transformer’s attention mechanism is far from exhausted. From dense attention → sparse attention → compressed attention, each compression challenges the assumption that “attention must be quadratic.” If you’re building AI infrastructure, this is a direction worth exploring deeply.

Final Thoughts

The most frightening thing about DeepSeek V4 isn’t its benchmark scores—it’s the proof that frontier AI capabilities can be delivered as open-source + low-cost. When GPT-5 might price at /million tokens, V4-Pro sells for .30. That gap isn’t a discount; it’s structural.

Western AI giants now face a choice: continue the closed-source premium route, or be forced into price wars with open-source competition. Either way, DeepSeek V4 has already changed the game.

References: DeepSeek-V4-Pro Technical Report, HuggingFace DeepSeek-V4-Pro, DataCamp DeepSeek V4 Analysis, MorphLLM DeepSeek V4 Guide