DeepSeek V4’s real shock isn’t that it’s another high-performance open-source model. It’s that it simultaneously validates three things: one of the most permissive open-source licenses, frontier training on non-NVIDIA hardware, and a cost structure that makes Western API pricing look like it belongs to a different era. On April 24, 2026, all three conditions were met [1][2][3].

The models shipped day-one on HuggingFace under MIT, which is more permissive than Meta’s Llama license or Mistral’s Apache 2.0 [1]. Training and inference run entirely on Huawei Ascend 950PR chips; NVIDIA and AMD received no pre-release optimization access [2]. API pricing starts at $0.14 per million input tokens for Flash, with the Pro version at $3.48 per million output tokens, roughly 1/8.6 of GPT-5.5 Pro’s output price [3].

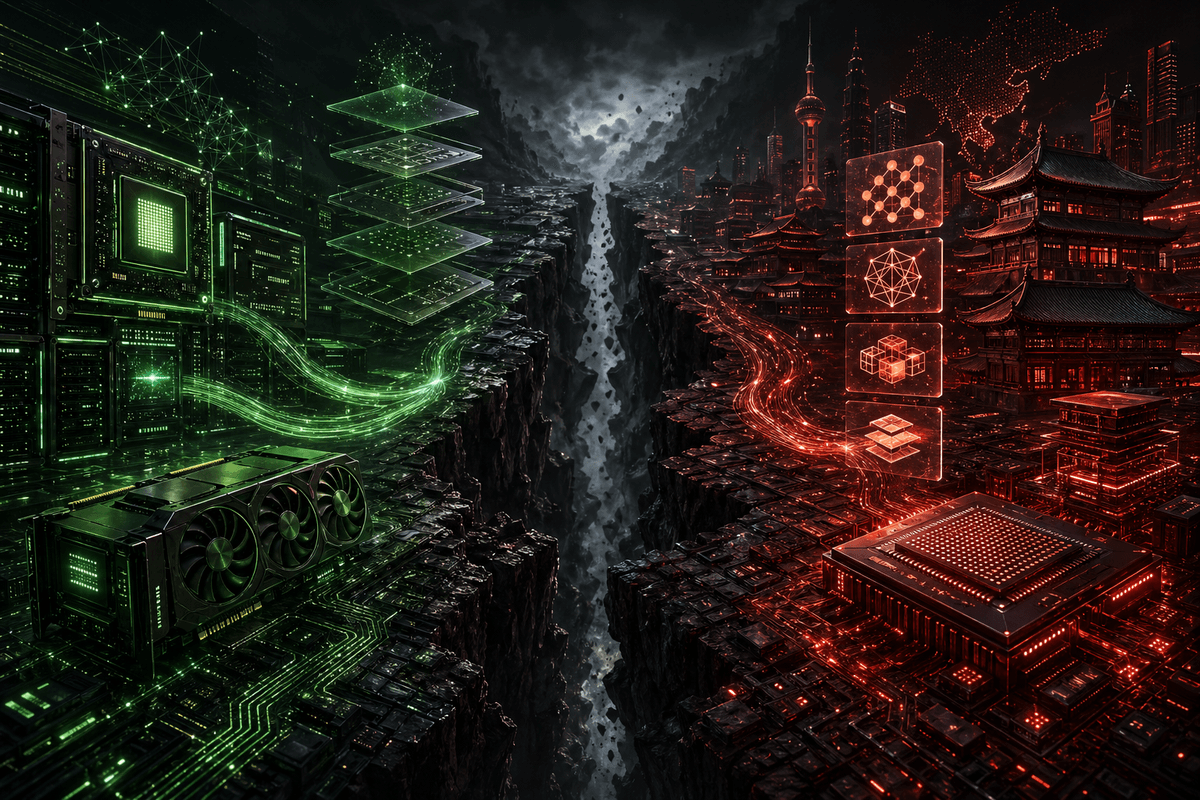

This is not a typical model release. It is a data point in the splitting of the global AI infrastructure stack.

Day-One Open Source: License as Strategy

V4 shipped with four checkpoints on HuggingFace on launch day [1]:

| Model | Total Params | Active Params | Context | Format |
|---|---|---|---|---|
| V4-Pro-Base | 1.6T | 49B | 1M | FP8 Mixed |
| V4-Pro (Instruct) | 1.6T | 49B | 1M | FP4 + FP8 |
| V4-Flash-Base | 284B | 13B | 1M | FP8 Mixed |
| V4-Flash (Instruct) | 284B | 13B | 1M | FP4 + FP8 |

The license is MIT, which is more permissive than Meta’s Llama license or Mistral’s Apache 2.0 [1]. Developers can download the weights, fine-tune on proprietary data, and deploy commercially without notifying DeepSeek. No usage restrictions, no royalty obligations.

The download sizes tell you what deployment looks like: Flash at 160GB can run on a 128GB Mac Studio with quantization; Pro at 865GB requires a GPU cluster but is available through the API [4].
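A quick back-of-envelope check makes the deployment claim concrete. The sketch below divides the quoted download sizes by the quoted parameter counts to get average bits per parameter, then computes the budget a 128GB machine would impose. It assumes decimal gigabytes and counts weights only, ignoring KV cache, activations, and operating-system overhead, so the real budget is tighter than the number it prints.

```python
# Back-of-envelope: average storage per parameter, from figures in the article.
# Assumption: sizes are decimal GB (1 GB = 1e9 bytes) and cover weights only.

CHECKPOINTS = {
    # name: (download size in GB, total parameters)
    "V4-Flash": (160, 284e9),
    "V4-Pro": (865, 1.6e12),
}


def bits_per_param(size_gb: float, params: float) -> float:
    """Average number of bits stored per parameter."""
    return size_gb * 1e9 * 8 / params


def max_bits_to_fit(memory_gb: float, params: float) -> float:
    """Upper bound on bits per parameter if weights alone must fit in memory_gb."""
    return memory_gb * 1e9 * 8 / params


for name, (size_gb, params) in CHECKPOINTS.items():
    print(f"{name}: {bits_per_param(size_gb, params):.2f} bits/param")

# Hypothetical target: a 128 GB machine holding only the Flash weights.
print(f"Flash budget on 128 GB: {max_bits_to_fit(128, 284e9):.2f} bits/param")
```

Both checkpoints land between 4 and 5 bits per parameter, which is consistent with the mixed FP4 + FP8 format in the table. Fitting Flash into 128GB means getting below roughly 3.6 bits per parameter, which is why additional quantization is needed.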

Pricing vs. competing frontier models [3][5]:

| Model | Input ($/M tokens) | Output ($/M tokens) |
|---|---|---|
| DeepSeek V4-Flash | $0.14 | $0.28 |
| DeepSeek V4-Pro | $1.74 | $3.48 |
| GPT-5.5 Pro | $15.00 | $30.00 |
| Claude Opus 4.7 | $15.00 | $75.00 |

V4-Pro output is ~8.6× cheaper than GPT-5.5 Pro; V4-Flash is ~107× cheaper [3]. DeepSeek launched V4-Pro with a 75% first-week discount ($0.44 input / $0.87 output per million tokens until May 5), while cutting cache-hit prices across the entire API to 1/10th of original levels [6].
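The ratios and the discounted prices follow directly from the table. As a minimal sketch, the snippet below recomputes them; `times_cheaper` and `discounted` are illustrative helper names, and rounding half-up to the cent is an assumption about how the discounted prices were quoted.

```python
from decimal import Decimal, ROUND_HALF_UP

# List prices in USD per million tokens, copied from the pricing table.
PRICES = {
    "DeepSeek V4-Flash": {"input": Decimal("0.14"), "output": Decimal("0.28")},
    "DeepSeek V4-Pro": {"input": Decimal("1.74"), "output": Decimal("3.48")},
    "GPT-5.5 Pro": {"input": Decimal("15.00"), "output": Decimal("30.00")},
    "Claude Opus 4.7": {"input": Decimal("15.00"), "output": Decimal("75.00")},
}


def times_cheaper(cheap: str, expensive: str, kind: str = "output") -> float:
    """How many times lower `cheap` is priced than `expensive`."""
    return float(PRICES[expensive][kind] / PRICES[cheap][kind])


def discounted(price: Decimal, discount: Decimal = Decimal("0.75")) -> Decimal:
    """Price after a fractional discount, rounded half-up to the cent (assumed)."""
    return (price * (1 - discount)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(f"Pro vs GPT-5.5 Pro:   {times_cheaper('DeepSeek V4-Pro', 'GPT-5.5 Pro'):.1f}x")
print(f"Flash vs GPT-5.5 Pro: {times_cheaper('DeepSeek V4-Flash', 'GPT-5.5 Pro'):.1f}x")
print("Discounted Pro input: ", discounted(PRICES["DeepSeek V4-Pro"]["input"]))
print("Discounted Pro output:", discounted(PRICES["DeepSeek V4-Pro"]["output"]))
```

Note that the ~8.6× and ~107× figures compare output prices against GPT-5.5 Pro specifically; against Claude Opus 4.7’s $75 output price the gap is larger.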

The intent is transparent: anchor usage habits with a hard-to-refuse price during the developer evaluation window. Once workflows are built and integration costs are sunk, the motivation to migrate back to premium APIs collapses even after the discount ends. This isn’t selling models; it’s buying ecosystem share.

How the License Compares to Other Chinese Labs

DeepSeek’s day-one MIT release stands out against recent Chinese model launches:

MiniMax M2.7 launched its API on March 18, 2026, but didn’t release weights until April 12, 25 days later [7]. Worse, the license is explicitly non-commercial: it offers MIT-style terms for non-commercial use, but commercial deployment requires written authorization from MiniMax [7]. MiniMax’s earlier models (M2, M2.5) shipped under MIT or Modified-MIT, making M2.7 a clear regression. The HuggingFace community thread hit hundreds of critical comments within days [7].

GLM-5.1 from Z.ai (Zhipu) launched its API on March 27 and released weights on April 7, about 11 days later [8]. The license is MIT, which is good. But the delay matters: in those 11 days, developers evaluating the model had to use the API without the option to self-host. GLM-5 had a similar pattern, with the API on February 11 and weights about two weeks later [8].

Table: Open-Weight Release Comparison

| Model | API Launch | Weights Released | Delay | License |
|---|---|---|---|---|
| DeepSeek V4 | Apr 24, 2026 | Apr 24, 2026 | 0 days | MIT |
| GLM-5.1 | Mar 27, 2026 | Apr 7, 2026 | 11 days | MIT |
| MiniMax M2.7 | Mar 18, 2026 | Apr 12, 2026 | 25 days | Non-commercial |

Day-one open source isn’t a gesture — it’s a strategy. In an AI infrastructure race where switching costs compound with every API call, the window between “API is available” and “weights are downloadable” is the platform lock-in window. DeepSeek compressed that window to zero — no hesitation period, no lock-in opportunity.

The cost of delay is real: GLM-5.1’s 11-day gap forced evaluators to test via API only. MiniMax M2.7’s 25-day delay combined with a non-commercial license triggered hundreds of critical comments on HuggingFace [7]. DeepSeek chose a more aggressive path: trade licensing permissiveness for ecosystem adoption speed. This isn’t altruism; it’s the most efficient way to compete for developer attention and deployment habits in an oversupplied open-source model market.
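The delay column in the release comparison table can be reproduced from the dates alone. The following is a small sketch using only the dates quoted in this section; `weights_delay_days` is an illustrative helper name.

```python
from datetime import date

# (API launch, weights released), as quoted in the release comparison table.
RELEASES = {
    "DeepSeek V4": (date(2026, 4, 24), date(2026, 4, 24)),
    "GLM-5.1": (date(2026, 3, 27), date(2026, 4, 7)),
    "MiniMax M2.7": (date(2026, 3, 18), date(2026, 4, 12)),
}


def weights_delay_days(model: str) -> int:
    """Days between API availability and downloadable weights."""
    api_launch, weights_released = RELEASES[model]
    return (weights_released - api_launch).days


for model in RELEASES:
    print(f"{model}: {weights_delay_days(model)} days")
```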

The Huawei Ascend Bet: From Experiment to Shipment

DeepSeek V4 runs entirely on Huawei hardware: Ascend 950PR chips for both training and inference [2]. The lab withheld pre-release optimization access from NVIDIA and AMD, giving Huawei and Cambricon weeks of early access instead [9].

This wasn’t the first attempt. In early 2025, DeepSeek tried training its R2 reasoning model on Ascend 910C. Those runs failed repeatedly: inter-chip communication latency caused synchronization failures, and memory consistency errors corrupted training progress [10]. DeepSeek abandoned Ascend for R2 and reverted to NVIDIA GPUs, relegating Huawei hardware to inference only [10].

V4 is the second attempt. It succeeded. The difference isn’t just how much the hardware improved — it’s that DeepSeek proved, under existing export controls, non-NVIDIA hardware can now support the full lifecycle of a frontier model.

Ascend 950PR vs. NVIDIA hardware [2][11]:

| Metric | Ascend 950PR | NVIDIA H20 | NVIDIA H100 | NVIDIA B200 |
|---|---|---|---|---|
| Performance relative to H20 | 2.8× | Baseline | n/a | n/a |
| Inference performance relative to H100 | ~60% | n/a | Baseline | >2× |
| Unit price | ~$6,900 | Restricted export | $25,000+ | $30,000+ |
| 2026 planned output | 750,000 units | Export limited | n/a | n/a |

The Ascend 950PR delivers roughly 60% of H100 inference performance [11]. That’s not parity, but it’s viable, especially combined with V4’s sparse architecture, which activates only about 3% of parameters per token (49B of 1.6T for V4-Pro).

The software stack is Huawei’s CANN (Compute Architecture for Neural Networks), a CUDA alternative. CANN’s maturity gap is real: R2’s training failures came from CANN’s distributed training limitations, not hardware defects [10]. V4’s success suggests the gap narrowed between early 2025 and early 2026. The result: a frontier model trained and served without NVIDIA.

Architecture: How Efficiency Funded the Hardware Gap

V4 retains the DeepSeekMoE framework from V3, but makes three structural changes [1]:

1. Hybrid Attention (CSA + HCA)

V4 replaces standard attention with two alternating mechanisms:

- CSA (Compressed Sparse Attention): Compresses KV caches so each query only attends to top-k compressed entries. Provides local, detailed context.

- HCA (Heavily Compressed Attention): Aggressive 128× compression, then dense attention over the compressed representation. Provides global, approximate context.

CSA and HCA interleave through the network. At 1M-token context, V4-Pro requires only 27% of V3.2’s single-token inference FLOPs and 10% of its KV cache [1]. These aren’t rounding differences; they’re structural efficiency gains.
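To make the difference between the two mechanisms tangible, here is a toy, single-query sketch in plain Python. It is not DeepSeek’s implementation: mean-pooling as the compression step, the block sizes other than 128, and all function names are assumptions chosen for illustration. What it does preserve is the contrast described above: the CSA-like path compresses lightly and attends only to the top-k compressed entries, while the HCA-like path compresses by 128× and attends densely over what remains.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def mean_pool(vectors, block):
    """Compress a sequence by averaging each run of `block` vectors (assumed scheme)."""
    pooled = []
    for start in range(0, len(vectors), block):
        chunk = vectors[start:start + block]
        dim = len(chunk[0])
        pooled.append([sum(v[d] for v in chunk) / len(chunk) for d in range(dim)])
    return pooled


def attend(query, keys, values):
    """Scaled softmax attention of one query over the given keys and values."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]


def sparse_compressed_attention(query, keys, values, block=4, top_k=2):
    """CSA-like: light compression, then attention over only the top-k entries."""
    ck, cv = mean_pool(keys, block), mean_pool(values, block)
    scores = [dot(query, k) for k in ck]
    keep = sorted(range(len(ck)), key=lambda i: scores[i], reverse=True)[:top_k]
    return attend(query, [ck[i] for i in keep], [cv[i] for i in keep])


def heavy_compressed_attention(query, keys, values, block=128):
    """HCA-like: 128x compression, then dense attention over all entries."""
    return attend(query, mean_pool(keys, block), mean_pool(values, block))


# Toy sequence of 256 two-dimensional keys and values.
keys = [[math.sin(i), math.cos(i)] for i in range(256)]
values = [[float(i), 1.0] for i in range(256)]
query = [1.0, 0.0]

print("CSA-like output:", sparse_compressed_attention(query, keys, values))
print("HCA-like output:", heavy_compressed_attention(query, keys, values))
print("entries attended:", 2, "of", len(mean_pool(keys, 4)), "vs all", len(mean_pool(keys, 128)))
```

In this toy setup the sparse path looks at 2 of 64 lightly compressed entries, while the heavy path looks at both of its 2 entries, each summarizing 128 tokens. That mirrors the local-detail versus global-approximation split, though the real savings depend on details the article does not specify.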

2. Muon Optimizer

V4 switches most parameters from AdamW to Muon, reporting faster convergence and better stability at trillion-parameter scale [1]. AdamW is retained only for embeddings, the prediction head, and RMSNorm weights. Peak learning rate: 2.0e-4 with cosine decay.
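The optimizer split and the schedule can be sketched in a few lines. This is an illustration under stated assumptions, not the training recipe: the article gives only the peak learning rate and the decay shape, so the absence of warmup, the zero floor, and the parameter-name fragments used for routing are all hypothetical.

```python
import math

PEAK_LR = 2.0e-4  # peak learning rate quoted in the article


def cosine_lr(step: int, total_steps: int, peak: float = PEAK_LR, floor: float = 0.0) -> float:
    """Cosine decay from `peak` at step 0 to `floor` at `total_steps`.

    Assumption: no warmup phase and a floor of zero; the article states neither.
    """
    progress = min(max(step / total_steps, 0.0), 1.0)
    return floor + 0.5 * (peak - floor) * (1.0 + math.cos(math.pi * progress))


# Hypothetical name fragments; real checkpoints may name these modules differently.
ADAMW_MARKERS = ("embed", "lm_head", "norm")


def optimizer_for(param_name: str) -> str:
    """Route embeddings, the prediction head, and norm weights to AdamW, the rest to Muon."""
    return "adamw" if any(marker in param_name for marker in ADAMW_MARKERS) else "muon"


for step in (0, 250, 500, 750, 1000):
    print(step, f"{cosine_lr(step, 1000):.2e}")

for name in ("embed_tokens.weight", "layers.3.attn_norm.weight", "layers.3.mlp.experts.7.w1"):
    print(name, "->", optimizer_for(name))
```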

3. Manifold-Constrained Hyper-Connections (mHC)

Standard residual connections are replaced with mHC, which projects residual signals onto manifolds to stabilize signal propagation in very deep networks [1].
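The article does not say which manifold is used or how the projection is computed. One plausible reading, offered here purely as an assumption, is that several parallel residual streams are mixed by a matrix constrained to be doubly stochastic (every row and column sums to 1), so that mixing can neither amplify nor drain the total signal. The sketch below uses Sinkhorn-style alternating normalization as a stand-in projection; the function names and the choice of Sinkhorn are illustrative, not taken from the source.

```python
import math


def sinkhorn(logits, iterations=50):
    """Approximately map a square matrix of logits to a doubly stochastic matrix
    by alternating row and column normalization (a swapped-in technique)."""
    matrix = [[math.exp(x) for x in row] for row in logits]
    n = len(matrix)
    for _ in range(iterations):
        # Normalize rows so each sums to 1.
        matrix = [[x / sum(row) for x in row] for row in matrix]
        # Normalize columns so each sums to 1.
        col_sums = [sum(matrix[i][j] for i in range(n)) for j in range(n)]
        matrix = [[matrix[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return matrix


def mix_streams(streams, mixing):
    """Mix n residual streams (lists of floats) with an n x n mixing matrix."""
    n, dim = len(streams), len(streams[0])
    return [
        [sum(mixing[i][j] * streams[j][d] for j in range(n)) for d in range(dim)]
        for i in range(n)
    ]


# Hypothetical unconstrained mixing logits for three residual streams.
logits = [[0.1, 1.2, -0.3], [0.5, -0.7, 0.9], [1.0, 0.2, 0.0]]
mixing = sinkhorn(logits)
streams = [[1.0, 2.0], [3.0, -1.0], [0.5, 0.5]]
mixed = mix_streams(streams, mixing)

print("row sums:", [round(sum(row), 6) for row in mixing])
print("col sums:", [round(sum(mixing[i][j] for i in range(3)), 6) for j in range(3)])
print("mixed streams:", mixed)
```

Because the columns sum to 1, the per-dimension total across streams is unchanged by mixing, which is the kind of conservation property that would help keep signal magnitudes stable across many layers.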

V4 vs. V3.2 at 1M-token context [1]:

| Metric | V3.2 | V4-Pro | Change |
|---|---|---|---|
| Per-token inference FLOPs | 100% | 27% | 73% reduction |
| KV cache memory | 100% | 10% | 90% reduction |
| Total parameters | 671B | 1.6T | 2.4× larger |
| Active parameters | 37B | 49B | 1.3× more |

The counterintuitive result: V4 is 2.4× larger but uses 73% less compute per token at full context length. This efficiency isn’t an academic curiosity — it’s the precondition for hardware decoupling. Without the 73% FLOPs reduction, the Ascend 950PR — at roughly 60% of H100 inference performance — could not sustain production deployment of a 1.6T-parameter model. Architecture paid for the hardware gap.
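A rough calculation shows how the two numbers interact. This is an illustration, not a benchmark: it assumes decoding is purely compute-bound and that throughput scales linearly with chip speed and inversely with per-token FLOPs, neither of which the cited sources establish. `relative_throughput` is an illustrative helper name.

```python
# Figures quoted in the article.
V4_FLOPS_VS_V32 = 0.27  # V4-Pro per-token FLOPs relative to V3.2 at 1M context
ASCEND_VS_H100 = 0.60   # Ascend 950PR inference performance relative to H100


def relative_throughput(chip_speed: float, flops_per_token: float) -> float:
    """Tokens per second relative to V3.2 on an H100 (= 1.0).

    Assumption: compute-bound decoding with linear scaling in both inputs.
    """
    return chip_speed / flops_per_token


v32_on_ascend = relative_throughput(ASCEND_VS_H100, 1.0)
v4_on_ascend = relative_throughput(ASCEND_VS_H100, V4_FLOPS_VS_V32)
v4_on_h100 = relative_throughput(1.0, V4_FLOPS_VS_V32)

print(f"V3.2 on Ascend: {v32_on_ascend:.2f}x")
print(f"V4 on Ascend:   {v4_on_ascend:.2f}x")
print(f"V4 on H100:     {v4_on_h100:.2f}x")
```

Under these simplifying assumptions, V4-Pro on the slower chip would still process long-context tokens about 2.2 times faster than V3.2 did on an H100, while V3.2 on the same Ascend chip would run at 0.6 times. The architecture change more than offsets the hardware deficit in this model, although memory bandwidth and interconnect effects are ignored.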

What This Means for NVIDIA

Stock and Market Reaction

On V4’s release day, NVIDIA stock dropped 1.41% [12]. Meanwhile, Chinese chip stocks surged: SMIC rose 9.4% in Hong Kong trading, and Hua Hong Semiconductor gained more than 13% [13].

By one estimate, if export controls disappeared tomorrow, Chinese enterprises would buy approximately 1.5 million H200 units this year, roughly $30 billion in potential NVIDIA revenue [12]. China’s AI infrastructure market is $50 billion annually, growing 50% per year [12]. The implication: that money won’t disappear as export controls tighten. It will flow to domestic alternatives.

Huang’s Concern: Not Volume, Trajectory

NVIDIA CEO Jensen Huang warned on the Dwarkesh Podcast before V4’s release that DeepSeek optimizing for Huawei chips would be “a horrible outcome” for the United States [14]. The logic isn’t about Huawei’s current output (Ascend’s 2026 total capacity equals roughly 3-5% of NVIDIA’s total compute [14]) but about the path dependency of stack migration.

The core of path dependency: every time a frontier model like V4 ships on Ascend, the migration cost drops for the next Chinese lab. Every CANN improvement smooths the CUDA-to-CANN learning curve. ByteDance and Alibaba received 950PR samples in January 2026 and have been running benchmarks in production environments for two months [15]. This isn’t academic curiosity; it’s pre-deployment validation.

NVIDIA’s Defense: Speed vs. Cost

NVIDIA responded with Day-0 Blackwell support, claiming 3,500 tokens/second on the 1.6T model using NVFP4 [16]. The implicit message: “You can run on Huawei, but it’s faster here.”

The pricing table tells a different story. For Flash-class workloads, the NVIDIA solution costs 35× more. In production environments where inference cost dominates AI opex, “faster” only commands a premium in latency-sensitive scenarios. For the majority of batch processing, content generation, and backend inference tasks, Ascend’s performance is sufficient — and sufficient is competitive.

The raw performance picture is mixed. On specific MLPerf workloads, the Ascend 950PR achieves approximately 89% of H100-equivalent throughput at 40% lower power [17], but in large-scale distributed training it falls considerably short.

The Real Risk: Ecosystem Fork

The deeper issue isn’t chip sales; it’s software dependency. More than 4 million developers have built on CUDA, and models, frameworks, kernels, and deployment pipelines assume NVIDIA hardware [18]. This is a moat built from billions of dollars and tens of millions of developer-hours.

V4’s significance is that it proves this moat can be bypassed. If Chinese labs continue shipping frontier models on Ascend + CANN + MindSpore + MindIE, global AI infrastructure splits into two parallel ecosystems. Western companies stay on CUDA. Chinese companies build on CANN. Every fork deepens, and the cost of re-convergence compounds with each iteration.

V4 doesn’t prove the fork is irreversible. It proves the fork has already begun.

The Splitting Point

DeepSeek V4 won’t kill NVIDIA — this barely requires argument. The Ascend 950PR–Blackwell performance gap is real, and the CANN–CUDA ecosystem maturity gap will take years to close — if it closes at all. The vast majority of global AI training and inference workloads still run on NVIDIA hardware, and that won’t change in the near term.

But V4 changes the nature of the question. Before this, “can a non-US AI stack work?” was a theoretical question. After V4, it’s a product question: in which use cases is it good enough, in which is it not, and is the gap between the two widening or narrowing?

A frontier model built entirely without NVIDIA hardware, released under the most permissive open-source license, priced as if Western API economics never happened — these three conditions met simultaneously means the full non-US AI stack is a working commercial product, not a research concept. Whether that stack achieves efficiency and maturity parity with the US stack by 2027 or 2028 depends on CANN iteration speed and Chinese chip production ramp rates.

But the deeper question is: even if the performance gap persists, is the global AI market large enough to sustain two parallel ecosystems? Historical precedent — from x86 vs. ARM to iOS vs. Android — suggests technical divergences can persist indefinitely when the market is big enough. The AI infrastructure market is clearly sufficient. That means the fork V4 marks may not be a temporary deviation — it may be the beginning of a structural reorganization.

The variable to watch isn’t DeepSeek’s next model. It’s the follow-on speed of other Chinese labs. When ByteDance, Alibaba, and Zhipu ship flagship models on Ascend, the fork transforms from a case study to a trend. That inflection point may matter more than V4 itself.

References

1. DeepSeek-V4 Technical Report. Architecture details, CSA/HCA attention, Muon optimizer, mHC connections, benchmark results: https://huggingface.co/deepseek-ai/DeepSeek-V4-Pro/resolve/main/DeepSeek_V4.pdf

2. The Information. DeepSeek withheld V4 from NVIDIA/AMD for optimization, gave Huawei early access: https://www.theinformation.com/

3. Context Studios. “DeepSeek V4: The Open Source Pricing Earthquake,” pricing analysis vs GPT-5.5 Pro and Claude Opus 4.7: https://www.contextstudios.ai/blog/deepseek-v4-the-open-source-pricing-earthquake

4. HuggingFace DeepSeek V4 Blog. Checkpoint sizes, deployment guidance: https://huggingface.co/blog/deepseekv4

5. DeepSeek API Docs. Official pricing, V4 preview release: https://api-docs.deepseek.com/news/news260424

6. Reuters. DeepSeek 75% first-week V4-Pro discount, cache-hit prices cut to 1/10th: https://www.reuters.com/world/china/chinas-deepseek-slashes-prices-new-ai-model-2026-04-27/

7. MiniMax M2.7 License. Non-commercial license, weights released 25 days after API, community backlash on HuggingFace: https://github.com/MiniMax-AI/MiniMax-M2.7/blob/main/LICENSE ; Release timeline: https://serenitiesai.com/articles/minimax-m2-7-open-source-self-evolving-benchmarks-pricing-2026

8. GLM-5.1 Release Timeline. API March 27, weights April 7 (11-day delay), MIT license: https://z.ai/blog/glm-5.1

9. Reuters. “DeepSeek withholds latest AI model from US chipmakers including NVIDIA,” February 25, 2026: https://www.reuters.com/world/china/deepseek-withholds-latest-ai-model-us-chipmakers-including-nvidia-sources-say-2026-02-25/

10. My Written Word. R2 training failures on Ascend 910C, software stack maturity issues: https://mywrittenword.com/2026/04/05/deepseek-v4-huawei-ascend-chips-moe-architecture-export-controls-2026/

11. Tom’s Hardware. Ascend 910C test report: approximately 60% H100 inference performance: https://www.tomshardware.com/

12. Parameter.io. NVIDIA stock impact, $50B China AI market data: https://parameter.io/nvidia-nvda-stock-dips-as-deepseek-v4-opts-for-huawei-over-american-chips/

13. Yahoo Finance. China chip stocks surge on V4 release: SMIC +9.4%, Hua Hong +13%: https://finance.yahoo.com/sectors/technology/articles/deepseek-unveils-v4-models-lifts-164531615.html

14. The Next Web. Jensen Huang warns “horrible outcome” on Dwarkesh Podcast: https://thenextweb.com/news/nvidia-huang-deepseek-huawei-chips-horrible-outcome

15. Neural Network World. ByteDance/Alibaba 950PR sampling, 750,000 unit production, ecosystem fork analysis: https://neuralnetworkworld.com/deepseek-v4-to-run-on-huawei-chips-sidelining-nvidia/

16. NVIDIA Developer Blog. Day-0 Blackwell support, 3,500 tokens/sec on V4: https://developer.nvidia.com/blog/build-with-deepseek-v4-using-nvidia-blackwell-and-gpu-accelerated-endpoints/

17. World Today News. MLPerf Training v3.1: 89% H100-equivalent throughput on Ascend, 40% lower power: https://www.world-today-news.com/deepseek-v4-on-huawei-hardware-a-new-global-ai-standard/

18. Pulse Mark. CUDA ecosystem dependency, CANN alternative, ecosystem fork risk analysis: https://pulsemark.ai/deepseek-v4-release-multimodal-huawei-cuda/